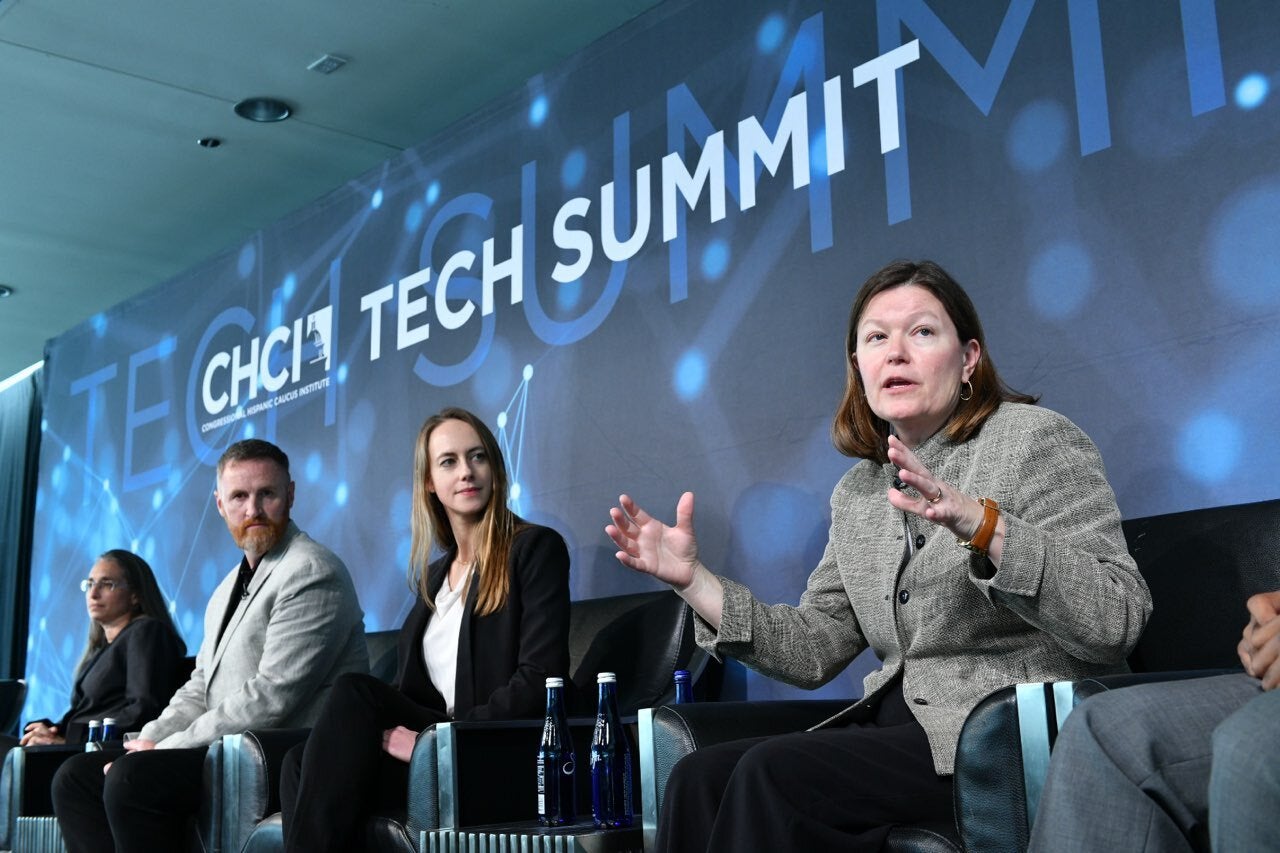

“Ethics of the Future,” a panel featuring Ethics Lab Leaders at the 2019 Tech Summit

Artificial Intelligence is Accurate, But Still Biased.

The panel took place on December 4, 2019, at the Congressional Hispanic Caucus Institute’s (CHCI) Tech Summit 2.0 and featured Roslyn Docktor, Dr. Ian McCulloh, Dr. Elizabeth Edenberg, Dr. Dawn Tilbury, and Sean Perryman. Dr. Maggie Little, Founding Co-Chair of the Tech and Society Initiative and Director of Ethics Lab at Georgetown University, moderated the discussion on ethics in artificial intelligence (AI).

The Summit aims to deepen policy stakeholders’ knowledge of technology. The panel focused on the potential biases in artificial intelligence. “Because subsystems [of artificial intelligence] are built by humans, opportunities for bias creep in, especially on the data collection part,” said Dr. Tilbury, Assistant Director in Engineering at the National Science Foundation.

The panel then turned to the improvements AI can offer over human selection. For example, IBM’s AI helped increase enrollment in Mayo Clinic’s clinical trials by 80%. “AI can find insights and make predictions better than the human eye, and more quickly,” said Ms. Docktor, Director of Technology Policy at IBM.

“We have to remember that when we do talk about the potential for bias in AI, the alternative is humans. I know it’s news to all of you, but humans have a lot of bias themselves,” Dr. Little responded. One important consideration in addressing AI bias is taking the perspectives of people of color into account. “A lot of times I go to AI policy panels and there is no one on the panel who is talking about the bias,” said Mr. Perryman, Director of Diversity and Inclusion at the Internet Association, “but it’s important that you all have an interest in this as you are making policy, because your perspective is important.”

Dr. Edenberg, Senior Ethicist at Ethics Lab and Assistant Research Professor at Georgetown University, concluded that policymakers need to develop inclusive, ethical guidelines for AI use. Developing these guidelines will require collaboration with ethics experts, lawyers, and technologists, as well as diverse stakeholders, to ensure that the process is attuned to social justice issues.

“One of the lessons going forward is that AI is not homogeneous,” Dr. Little remarked. “It’s an approach, it’s a technology, but it has lots of variations to it like most technologies.”

Original article: <https://ethicslab.georgetown.edu/blog/ai-is-accurate-but-still-biased>